Synthetic data is increasingly being used to train AI systems, but it raises serious questions about privacy, legal compliance, and fairness. While it aims to protect consumer data by replacing real records with artificial ones, risks like re-identification, bias, and regulatory scrutiny persist. For example:

- Re-identification Risks: Even "fully synthetic" data can be linked back to individuals through advanced attacks.

- Bias: Synthetic datasets often inherit biases from their source, leading to unfair AI outcomes.

- Legal Challenges: Laws like the CCPA and UCPA treat synthetic data differently, creating compliance confusion. In California, synthetic data must meet strict deidentification standards, while Utah explicitly excludes it from personal data regulations.

To mitigate these risks, organizations must implement privacy safeguards like Differential Privacy, conduct audits, and maintain clear documentation. However, balancing data utility with privacy remains a key challenge.

In short, synthetic data isn’t a foolproof solution to privacy concerns. Businesses must navigate complex laws, address risks proactively, and ensure consumer protection to avoid fines and reputational damage.

Privacy and Synthetic Data: The Good, The Bad, and The Ugly

sbb-itb-a8d93e1

What Is Synthetic Data?

Synthetic data refers to information created by algorithms and models, including generative AI. Unlike real-world data, which is gathered from actual events or observations, synthetic data is completely fabricated. It’s designed to replicate the statistical properties and patterns of real data without containing any actual records.

The creation process often involves generative modeling. Here, an AI algorithm analyzes a dataset’s patterns and produces new data points based on the learned probability distribution. This approach has gained traction in various industries. Fernando Lucini, Global Data Science and ML Engineering Lead at Accenture, explains:

"Synthetic data is artificially generated by an AI algorithm that has been trained on a real data set. It has the same predictive power as the original data but replaces it rather than disguising or modifying it."

One of the key differences between synthetic data and traditional anonymization methods is that synthetic data doesn’t retain a direct connection to real individuals. Unlike de-identification, which removes or masks identifiable information from real records, synthetic data replaces the original records entirely. The result is data that maintains the same structure, granularity, and predictive capabilities as the original, but without exposing personal information.

A notable example comes from 2021, when the National Institutes of Health collaborated with Syntegra to create a synthetic version of a COVID-19 patient database. This dataset, which included information on over 2.7 million screened individuals and 413,000 positive cases, mirrored the statistical properties of the original. Researchers worldwide were able to use it for vaccine and treatment development without risking patient privacy.

Let’s now explore how fully synthetic data differs from partially synthetic data, particularly in terms of privacy implications.

Fully Synthetic vs. Partially Synthetic Data

Synthetic data can be divided into two main categories, each with distinct privacy implications.

- Fully Synthetic Data: This type of data is entirely generated, meaning none of the original dataset’s records are included. Every variable is artificial, offering a high level of anonymity. Fully synthetic data is often considered non-personal and may not fall under regulations like the CCPA, provided re-identification is impossible.

- Partially Synthetic Data: This approach blends real and synthetic elements. Sensitive information, such as Social Security numbers or medical diagnoses, is replaced with artificial values, while non-sensitive variables remain unchanged. While this method retains more utility for analysis, it carries a higher risk of re-identification because parts of the original data are still present.

For example, the U.S. Census Bureau uses fully synthetic data to produce detailed workforce maps while ensuring respondent confidentiality.

However, even fully synthetic data isn’t entirely risk-free. Overfitting during the generation process can inadvertently increase the likelihood of re-identification. This creates a balancing act: the closer synthetic data mimics the original, the more useful it becomes, but the risk of privacy breaches also rises.

Synthetic Data and US Consumer Protection Laws

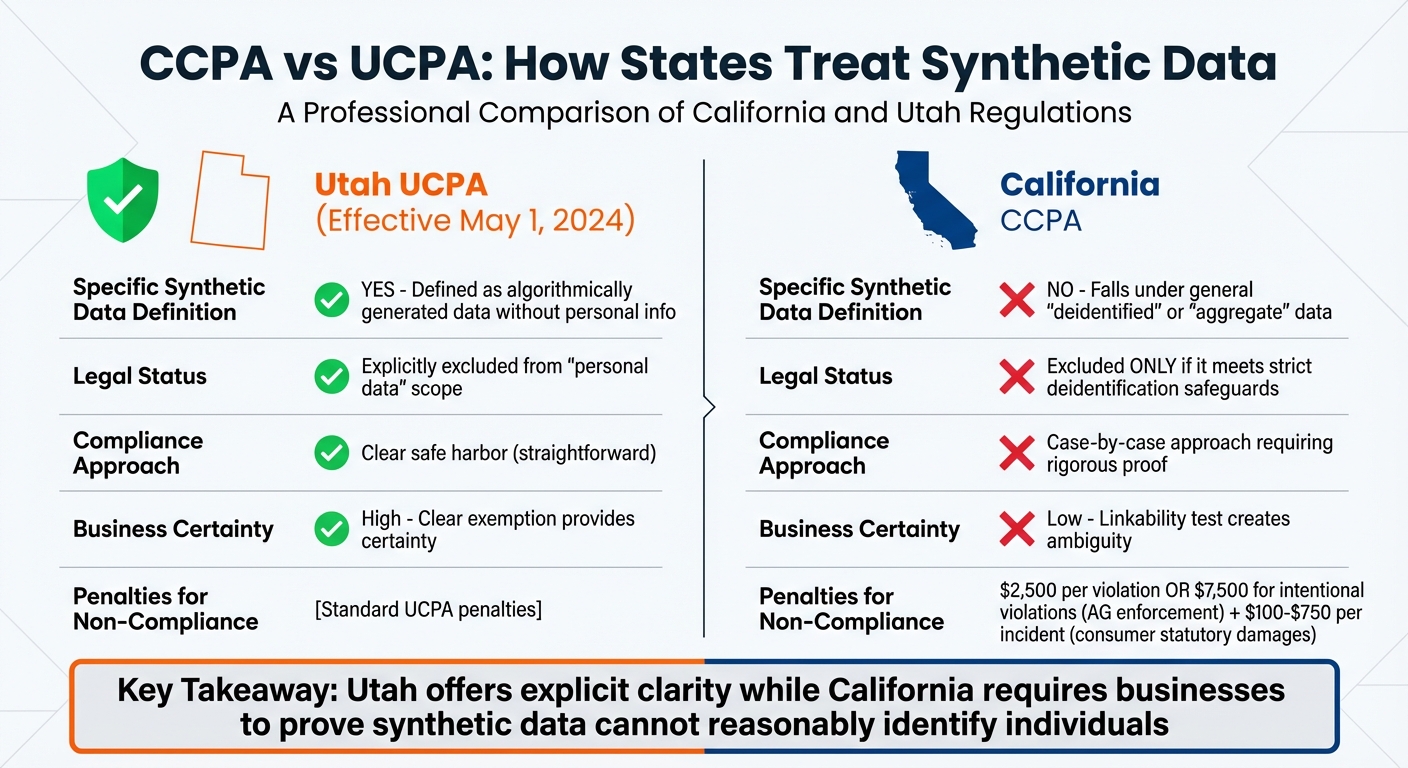

CCPA vs UCPA Synthetic Data Regulations Comparison

In the U.S., the legal treatment of synthetic data depends heavily on state-specific laws and how the data is generated. These factors shape the obligations businesses face and the rights granted to consumers.

CCPA and Synthetic Data

Under the California Consumer Privacy Act (CCPA), personal information is broadly defined as data that "identifies, relates to, describes, is capable of being associated with, or could reasonably be linked, directly or indirectly, with a particular consumer or household." That word – "reasonably" – makes the classification of synthetic data tricky.

The CCPA doesn’t explicitly reference synthetic data. Instead, it uses a linkability test. If synthetic data can reasonably be connected to a real individual or household, it’s considered personal information. As Rocklaw PLLC explains:

"Synthetic data derived from personal information may remain personal information if linkable to individuals."

To avoid falling under CCPA obligations, businesses must ensure their synthetic data meets deidentification standards. This involves implementing technical measures to prevent re-identification, publicly committing to keeping the data deidentified, and requiring contracts that prohibit re-identification attempts by anyone who receives the data. Non-compliance can lead to serious penalties: the California Attorney General may impose fines of $2,500 per violation or $7,500 for intentional violations, while consumers can pursue statutory damages ranging from $100 to $750 per incident for negligent breaches.

Some states, however, take a more straightforward approach, like Utah.

UCPA and Synthetic Data Exemptions

Starting May 1, 2024, Utah’s UCPA explicitly excludes synthetic data from its definition of personal data, classifying it as deidentified. This gives businesses operating in Utah much-needed clarity. Ryan Dowell and Natasha G. Kohne of Akin Gump highlight:

"By clarifying the status of synthetic data, the amended UCPA provides certainty for organizations to use synthetic data and comply with Utah’s privacy law."

The difference between California and Utah is stark. While Utah offers a clear safe harbor, California takes a more cautious, case-by-case approach, requiring rigorous proof of deidentification. This fragmented legal landscape poses challenges for companies navigating privacy laws across multiple states.

| Feature | Utah (UCPA) | California (CCPA) |

|---|---|---|

| Specific Synthetic Data Definition | Yes; defined as algorithmically generated data without personal info. | No; falls under general "deidentified" or "aggregate" data. |

| Legal Status | Explicitly excluded from "personal data" scope. | Excluded only if it meets strict deidentification safeguards. |

| Effective Date of AI Amendments | May 1, 2024 | January 1, 2026 (for training transparency) |

Key Consumer Protection Issues with Synthetic Data

These challenges highlight the tough balancing act between ensuring data is useful for AI and protecting consumer privacy under current U.S. laws. While synthetic data is often promoted as a solution to privacy concerns, it brings its own set of risks that can directly impact consumers.

Re-identification Risks

Synthetic data doesn’t guarantee complete anonymity. Even when it’s generated from scratch, it can still fall prey to advanced attacks. For instance, membership inference attacks can reveal whether a specific individual’s data was part of the original training set. Attribute inference attacks can uncover personal traits, and reconstruction attacks may rebuild the original training records if the AI model memorizes specific cases instead of identifying broader patterns.

Attorney Mark D. Metrey of Hudson Cook LLP cautions:

"The age of synthetic data has not eliminated privacy risk. Instead, it has introduced new complexities and heightened scrutiny as the lines between real and generated data continue to blur."

Adding to the problem, privacy metrics like the Distance to Closest Record (DCR) often fall short. These tests may fail to identify actual privacy leaks, leaving datasets vulnerable to attacks like membership inference.

But privacy isn’t the only issue. Synthetic data can also carry over biases from the original datasets.

Bias and Discrimination Concerns

Synthetic data often inherits the biases embedded in its source material. If the original dataset underrepresents certain groups or reflects historical discrimination, the synthetic data can perpetuate – or even amplify – these inequalities. This can lead to AI systems making unfair decisions in areas like hiring, lending, healthcare, or law enforcement.

Attempts to improve privacy through methods like suppressing small cell counts or removing outliers can unintentionally exclude minority groups from analysis. This exclusion reinforces existing social disadvantages. Even when synthetic records are entirely fabricated, if someone wrongly believes they’ve identified a real person and learns sensitive information about them, privacy harm can still occur.

While re-identification and bias are pressing concerns, another major challenge lies in balancing the usefulness of data with privacy protections.

Data Utility vs. Privacy Trade-offs

One of the biggest hurdles with synthetic data is finding the right balance between data utility and privacy. The closer synthetic data resembles real data, the more likely it is that private information can be reverse-engineered.

Organizations face a tricky trade-off. High-quality synthetic data is crucial for building effective AI systems, but preserving the statistical properties needed for accuracy often increases the risk of making individuals identifiable. Rocklaw PLLC captures this dilemma well:

"Synthetic data involves fundamental trade-offs between utility maintaining statistical properties needed for purposes and privacy ensuring individuals cannot be identified or inferred."

With Gartner forecasting that synthetic data will dominate AI training datasets by 2030, the pressure to balance these competing priorities is mounting. Failure to manage this balance could lead to severe privacy breaches.

Legal Compliance Challenges for Synthetic Data

When it comes to synthetic data, legal compliance adds another layer of complexity to the privacy concerns already discussed. The crux of the issue is that U.S. privacy laws were designed for "collected" data, not datasets that are artificially generated. This misalignment creates a gray area where compliance obligations and consumer rights are often unclear.

Data Subject Rights and Synthetic Data

Determining whether consumer rights apply to synthetic data is far from simple. Under the California Consumer Privacy Act (CCPA), synthetic data that remains "reasonably linkable" to an individual or household still qualifies as personal information. This means consumers retain their rights to access, delete, and correct this data.

Companies bear the burden of proving that their synthetic or de-identified data cannot reasonably be used to identify individuals. This is no small task. In 2019, researchers managed to re-identify 99.98% of individuals in a supposedly de-identified U.S. dataset using just 15 demographic attributes. This highlights how challenging it can be to ensure true anonymity.

Partially synthetic datasets are especially tricky. Their mix of real and artificial data increases the risk of re-identification. If such datasets can be traced back to individuals, they revert to being classified as personal information, reinstating all associated consumer rights.

Biometric data adds yet another layer of complexity. A notable example is Rivera v. Google Inc., where an Illinois federal court allowed claims under the Biometric Information Privacy Act (BIPA) to proceed. The case involved facial templates generated from user images, even though the templates weren’t directly linked to names. This shows that even de-identified biometric data can trigger legal obligations, a risk that extends to synthetic biometric datasets.

Regulatory Scrutiny and DPIAs

Regulators are increasingly scrutinizing synthetic data, especially in the context of AI training. Agencies like the California Privacy Protection Agency (CPPA) and the Federal Trade Commission (FTC) are focusing on the "opacity" of synthetic data and whether companies are overstating its privacy protections. This growing oversight reflects concerns about potential misrepresentations regarding anonymization.

To address these risks, organizations are expected to conduct Data Protection Impact Assessments (DPIAs) and maintain thorough documentation of how synthetic data is generated and what privacy safeguards are in place. DLA Piper advises companies to perform "privacy assurance assessments" to evaluate the likelihood of re-identification and the potential exposure of sensitive information.

The CCPA also mandates auditability. This means companies must keep detailed, unalterable logs that show who accessed the original data used to create synthetic datasets and for what purpose.

Contractual obligations add yet another layer of difficulty. Many data licensing agreements restrict "derivative use" or "secondary purposes", which could prohibit generating synthetic datasets from licensed real-world data. Violating these agreements could lead to breach of contract claims in addition to privacy law violations. These restrictions highlight the broader challenge of aligning synthetic data practices with evolving legal and contractual standards.

Mitigation Strategies for Consumer Protection Risks

To address the legal and privacy challenges previously discussed, organizations handling synthetic data must adopt practical measures to safeguard consumers. These strategies focus on tackling re-identification risks, bias, and utility concerns.

Privacy-Enhancing Technologies

Differential Privacy (DP) stands out as a leading method for protecting individual privacy. As Natalia Ponomareva and her colleagues at Google Research explain:

The core promise of DP is intuitive yet powerful: the outcome of a differentially private analysis or data release should be roughly the same whether or not any single individual’s data was included in the input dataset.

DP works by adding calibrated noise during data generation, offering a mathematical safeguard against re-identification. Techniques like DP-SGD (Stochastic Gradient Descent) and DP-FTRL (Follow-The-Regularized-Leader) are often employed alongside user-level contribution limits to minimize any single individual’s impact.

However, it’s essential to note that synthetic data’s effectiveness diminishes when the privacy budget (ε) is set at 4 or lower. Google Research cautions against assuming that synthetic data is inherently private:

A common and dangerous misconception is that synthetic data is inherently private.

Other privacy techniques can complement DP. K-anonymity ensures individuals cannot be uniquely identified within a group, while outlier suppression removes data points that could lead to linkage attacks. Combining these methods with DP enhances protection. Additionally, empirical privacy audits – testing synthetic data against known attack methods like membership inference and reconstruction attacks – can uncover vulnerabilities before deployment.

To further strengthen consumer protection, organizations must also focus on ongoing risk monitoring.

Risk Monitoring and Documentation

Constant vigilance is key to maintaining consumer protections. Organizations should track changes, document the parameters used in data generation, and log safeguards and validation results.

Regular testing is crucial. Techniques like Exact Match, Neighboring Match, and membership inference scoring help ensure that no real records persist and that potential weaknesses are identified. Bias monitoring is equally important since synthetic data can sometimes amplify existing discrimination. As the Information Commissioner’s Office notes:

The more that the synthetic data mimics real data, the greater the utility it has, but it is also more likely to reveal someone’s personal information.

Organizations should adjust their data generation methods as needed to address these risks.

Comprehensive documentation is also critical for operational efficiency and regulatory compliance. Keeping compliance logs that detail validation results, dataset limitations, and intended uses helps demonstrate accountability to regulators. Contracts with third-party vendors should explicitly prohibit re-identification efforts and mandate robust safeguards. Additionally, lineage tracking – recording the data’s origin, the generative models used, and specific DP parameters – creates a reliable audit trail. Finally, validating synthetic data against specific tasks, rather than relying solely on general metrics, ensures that DP-induced noise doesn’t compromise the data’s usability for its intended purpose.

Conclusion

Synthetic data, while innovative, doesn’t escape the reach of consumer protection laws or fully eliminate privacy risks. These challenges highlight the ongoing tension between advancing technology and regulatory compliance. Businesses bear the responsibility of proving that their synthetic data cannot reasonably lead to re-identification, as required by laws like the CCPA, Colorado Privacy Act, and Connecticut Data Privacy Act. With growing scrutiny from regulators like the FTC and state attorneys general on practices such as "anonymization loopholes", synthetic data cannot be treated as a shortcut to compliance.

Striking the right balance between data utility and privacy is crucial. Organizations can reduce risks by employing privacy-focused technologies and maintaining rigorous oversight. Key practices include using differential privacy, conducting thorough re-identification testing, keeping detailed documentation, and implementing robust governance policies. Practical steps, such as sanitizing source data before generating synthetic data, securely deleting original records post-validation, and including contractual clauses that prohibit re-identification, are also essential.

Synthetic data challenges the traditional boundaries of consumer protection. Companies that address risks like re-identification, bias, and regulatory compliance head-on will be better equipped to harness its potential while safeguarding consumer rights. Ignoring these responsibilities could lead to significant consequences, such as fines of up to $7,500 per intentional violation under California law, along with reputational harm and legal exposure. As discussed earlier, consistent monitoring and effective risk management are non-negotiable for organizations navigating this space.

FAQs

How can synthetic data still identify me?

Synthetic data isn’t completely immune to privacy risks. Issues like re-identification, attribute disclosure, or linkability can still arise. These happen when the models or datasets used to generate the synthetic data hold onto bits of original information or if they can be accessed in ways that reveal connections back to real individuals. To address these concerns, implementing strong safeguards is a must.

Does synthetic data count as personal data under the CCPA?

Synthetic data typically does not count as personal data under the California Consumer Privacy Act (CCPA) if it is completely de-identified and cannot be traced back to an individual. However, if there’s a reasonable way to link the data to a specific consumer, it might fall under the category of personal data. To stay compliant with consumer protection laws, implementing robust safeguards is crucial.

How can companies prove synthetic data is safe to share?

Companies can demonstrate that synthetic data is safe to share by ensuring it mirrors the statistical patterns of real data while excluding any actual personal information. This guarantees non-reversibility, meaning the original data cannot be reconstructed, which aligns with privacy regulations. Additionally, showcasing compliance with data protection standards and privacy laws strengthens confidence in its security.